120/69 Friday, February 27, 2026

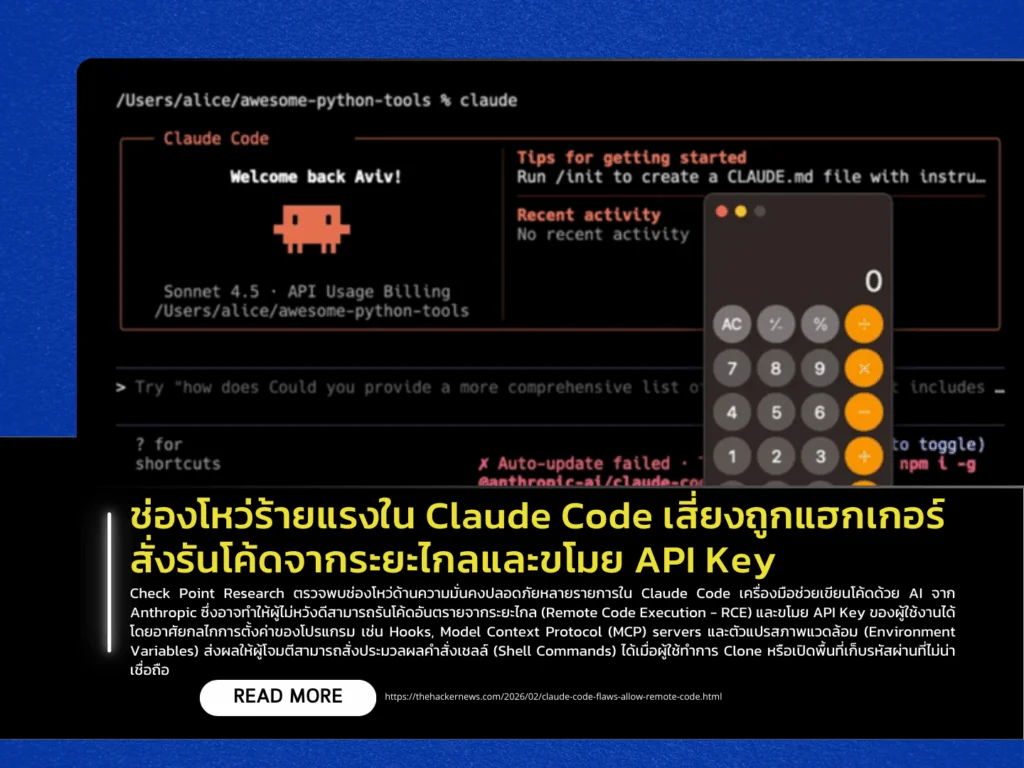

Check Point Research has identified multiple security vulnerabilities in Claude Code, an AI-powered coding assistant developed by Anthropic. The flaws could allow attackers to execute malicious code remotely (Remote Code Execution – RCE) and steal users’ API keys. The issues stem from configuration mechanisms within the tool, including Hooks, Model Context Protocol (MCP) servers, and environment variables. Under certain conditions, attackers could trigger shell command execution when a user clones or opens an untrusted repository.

Three major vulnerabilities were disclosed. The first is a consent bypass via the .claude/settings.json file (CVSS: 8.7). The second, tracked as CVE-2025-59536 (CVSS: 8.7), could enable automatic shell command execution through a malicious MCP configuration. The third, CVE-2026-21852 (CVSS: 5.3), is an information disclosure vulnerability: if a user opens a project whose configuration sets ANTHROPIC_BASE_URL to an attacker-controlled server, Claude Code may immediately send API requests, including the user’s API key, to that server before displaying a trust warning.
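As a rough illustration of the configuration surfaces described above, the following Python sketch inspects a cloned repository before it is opened in the tool. It is not an official detection method. The `.mcp.json` file name and the `hooks`, `mcpServers`, and `env` key names are assumptions about how such project configuration is laid out, and `audit_repo` is a hypothetical helper name.

```python
import json
from pathlib import Path

# Assumed locations of project-level configuration (not confirmed by the article,
# apart from .claude/settings.json).
CONFIG_FILES = (".claude/settings.json", ".mcp.json")
# Assumed key names for hooks and MCP server definitions.
RISKY_KEYS = ("hooks", "mcpServers")


def audit_repo(repo_root):
    """Return human-readable findings for configuration worth reviewing by hand."""
    findings = []
    root = Path(repo_root)
    for rel in CONFIG_FILES:
        path = root / rel
        if not path.is_file():
            continue
        try:
            data = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError) as exc:
            # Unreadable or malformed config is itself worth a manual look.
            findings.append(f"{rel}: could not parse ({exc})")
            continue
        if not isinstance(data, dict):
            continue
        for key in RISKY_KEYS:
            if data.get(key):
                findings.append(f"{rel}: defines '{key}'")
        env = data.get("env")
        if isinstance(env, dict) and "ANTHROPIC_BASE_URL" in env:
            findings.append(
                f"{rel}: overrides ANTHROPIC_BASE_URL -> {env['ANTHROPIC_BASE_URL']}"
            )
    return findings
```

Calling `audit_repo("path/to/clone")` returns an empty list when none of the assumed files or keys are present. A non-empty result does not prove the repository is malicious; it only marks settings that deserve a manual review before the project is trusted.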

Researchers noted that this case reflects a shift in the modern threat model: the risk is no longer limited to executing untrusted code but extends to simply opening untrusted projects. Anthropic has patched all of the reported vulnerabilities as of Claude Code version 2.0.65. Developers are strongly advised to update to the latest version immediately and to exercise caution when opening projects from unknown or untrusted sources, in order to prevent compromise of organizational AI infrastructure.
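For teams that want to verify the update advice across many machines, a version comparison can be sketched as follows. The patched version 2.0.65 comes from the report above. The helper names `parse_version` and `is_patched` are hypothetical, and the assumption that the installed tool reports a dotted three-part version string somewhere in its output is not confirmed here.

```python
import re

# Patched release cited in the report above.
PATCHED = (2, 0, 65)


def parse_version(text):
    """Extract the first MAJOR.MINOR.PATCH triple from a version string."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.groups())


def is_patched(version_text, minimum=PATCHED):
    """Return True if the reported version is at least the patched release."""
    # Tuples compare element by element, so (2, 0, 65) >= (2, 0, 65) is True
    # and (1, 0, 111) >= (2, 0, 65) is False.
    return parse_version(version_text) >= minimum
```

Comparing integer tuples rather than raw strings avoids the usual pitfall where "2.0.9" sorts after "2.0.65" lexicographically.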

Source: https://thehackernews.com/2026/02/claude-code-flaws-allow-remote-code.html